Remove Object

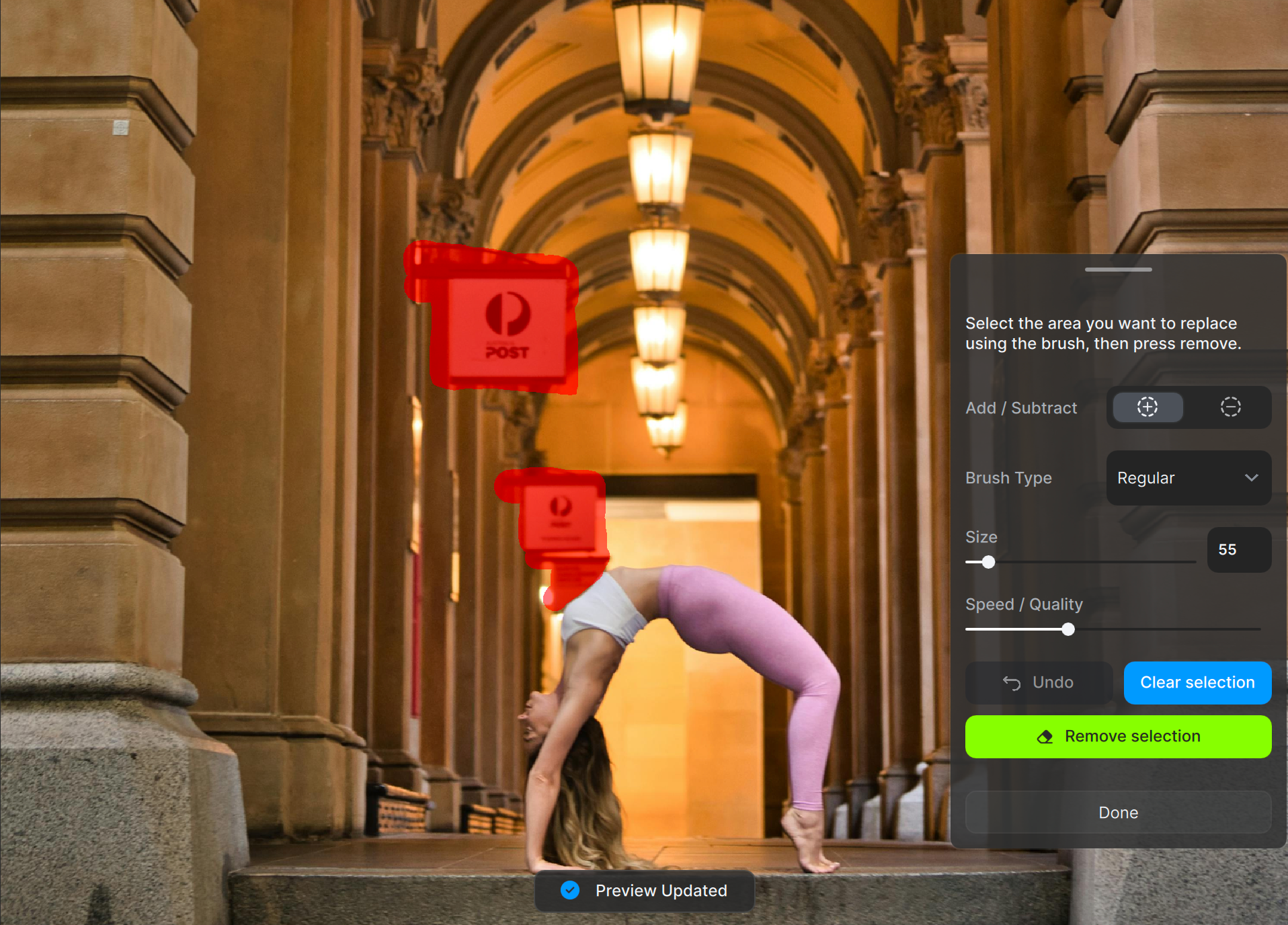

The Remove Tool uses generative AI to eliminate unwanted objects from your images. By analyzing the surrounding context, it not only erases the object but also generates a background that blends with the rest of the image.

System Requirements

Because this feature is resource-intensive, it is currently not supported on Macs with AMD GPUs. It also does not work on macOS 12 (Monterey) or earlier.

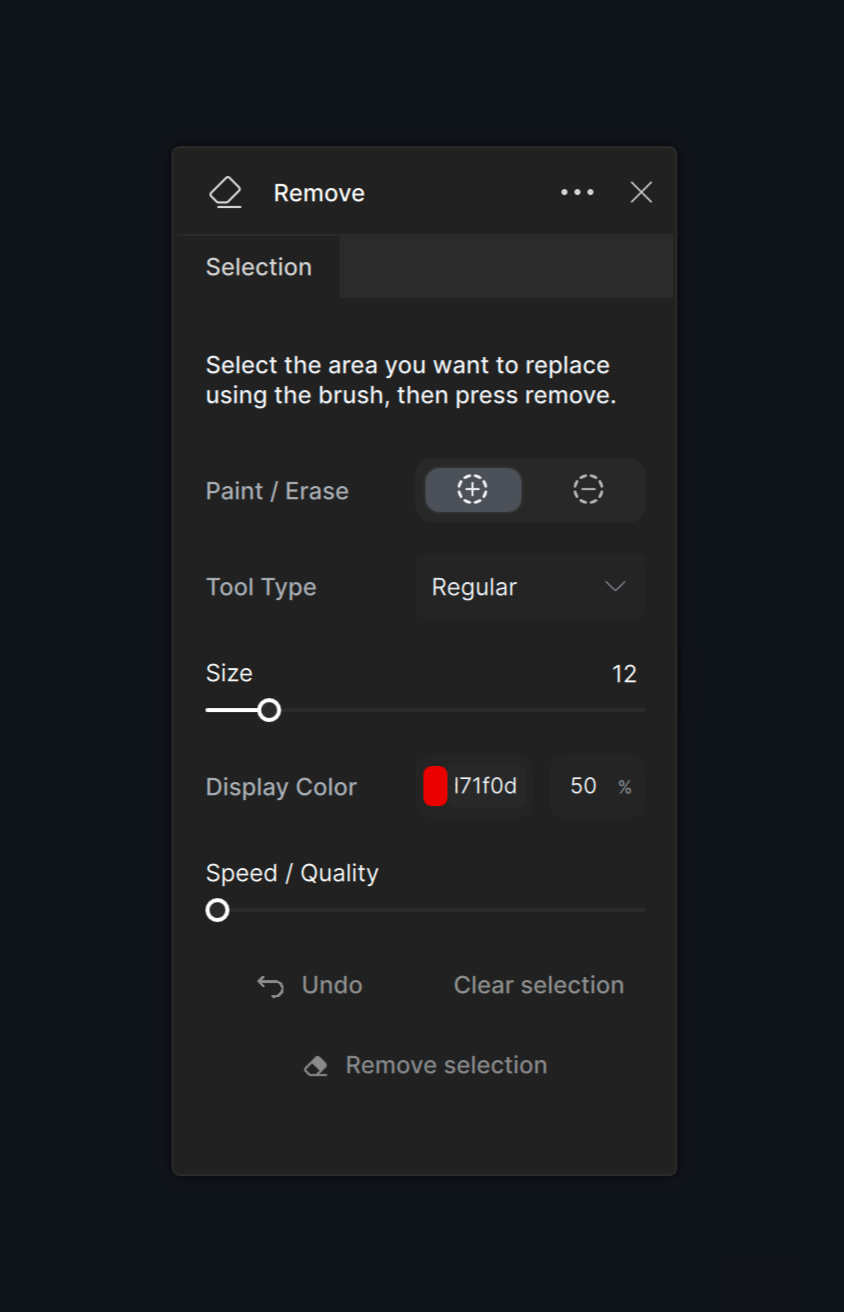

Controls

Use the Controls tab to adjust the brush and control the processing.

Mask Settings

Add/Subtract

Switch between the add and subtract mask buttons to refine the mask if necessary.

Brush Type

Choose between the Regular brush and the Object Selection brush. The Object Selection brush identifies and selects entire objects.

Speed / Quality Slider

The Remove Tool's performance depends on the computer's system profile and the selected editing priorities. Prioritizing speed conserves resources and reduces processing steps on weaker computers, while prioritizing quality yields the best results when hardware performance is not a constraint.

Click the Undo button to undo the most recent removal. Click the Clear Selection button to erase the mask. Once you are satisfied with the mask, click the Remove Selection button to remove the object.

Best Practices

Size & Distance

Keep mask sizes below ¼ of the total image size. Process each object individually when dealing with multiple objects requiring separate masks. Ensure that the maximum distance between masked objects does not exceed 2000 pixels.
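
The size and distance guidelines above can be expressed as a quick check. The following Python sketch is illustrative only and is not part of the Remove Tool: the `Box` class and the `check_masks` function are hypothetical helpers, each mask is approximated by its bounding box, "size" is assumed to mean area, and "distance" is assumed to mean the shortest gap between two bounding boxes.

```python
from dataclasses import dataclass
from math import hypot

MAX_MASK_FRACTION = 0.25  # guideline: keep each mask below 1/4 of the image
MAX_DISTANCE_PX = 2000    # guideline: masked objects at most 2000 px apart


@dataclass(frozen=True)
class Box:
    """Bounding box of one mask, in pixels (hypothetical helper)."""
    x: int
    y: int
    width: int
    height: int

    @property
    def area(self) -> int:
        return self.width * self.height


def gap(a: Box, b: Box) -> float:
    """Shortest distance between two boxes; 0 if they touch or overlap."""
    dx = max(a.x - (b.x + b.width), b.x - (a.x + a.width), 0)
    dy = max(a.y - (b.y + b.height), b.y - (a.y + a.height), 0)
    return hypot(dx, dy)


def check_masks(boxes: list[Box], image_width: int, image_height: int) -> list[str]:
    """Return a warning for every guideline the masks appear to violate."""
    warnings = []
    image_area = image_width * image_height

    # Size rule: assumed to compare mask area against total image area.
    for i, box in enumerate(boxes):
        if box.area / image_area >= MAX_MASK_FRACTION:
            warnings.append(f"mask {i} covers 1/4 or more of the image")

    # Distance rule: assumed to apply to every pair of masks.
    for i in range(len(boxes)):
        for j in range(i + 1, len(boxes)):
            if gap(boxes[i], boxes[j]) > MAX_DISTANCE_PX:
                warnings.append(f"masks {i} and {j} are more than 2000 px apart")

    return warnings
```

For example, on a 6000 × 4000 pixel image, two 1000 × 1000 masks whose edges are 3000 pixels apart would trigger the distance warning, which suggests removing those objects in separate passes.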

Inclusion

Mask the entire object rather than a partial section. Include the shadows and reflections of the objects. If those are not removed, the AI model may attempt to replace the object with something similar.